Generating Audio Stories with Gemini TTS

In the end, I chose Google Gemini, Google TTS, and Cloudflare R2 to generate audio stories from text.

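I'm currently working on a side project for language learning. Its main features are generating content with AI and converting text into audio files. I also need cloud storage to hold those audio files.

Cost was my top priority, since I figured that switching between cloud platforms later wouldn't be too difficult.

I ended up with Google Gemini, Google TTS, and Cloudflare R2. They provide API docs and examples, but I found some parts lacking, so I decided to write a post to share what I learned. I'm using Go, and this post covers only basic usage.

For Google Gemini and TTS, I call the REST APIs directly. Client libraries are available, but I found the REST API more convenient than setting up the libraries.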
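Both REST calls in this post follow the same shape: marshal a payload, POST it, read the body, and decode JSON. Here is a minimal sketch of that shared pattern with a status check added. The helper name `postJSON` and the local test server in `echoDemo` are my own illustration, not part of the project code, and the demo runs without an API key.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
)

// postJSON is a hypothetical helper. It sends payload as JSON, returns an
// error for any non-2xx status, and otherwise decodes the response into out.
func postJSON(client *http.Client, url string, payload, out any) error {
	body, err := json.Marshal(payload)
	if err != nil {
		return err
	}
	req, err := http.NewRequest(http.MethodPost, url, bytes.NewReader(body))
	if err != nil {
		return err
	}
	req.Header.Set("Content-Type", "application/json")

	res, err := client.Do(req)
	if err != nil {
		return err
	}
	defer res.Body.Close()

	raw, err := io.ReadAll(res.Body)
	if err != nil {
		return err
	}
	if res.StatusCode < 200 || res.StatusCode > 299 {
		return fmt.Errorf("unexpected status %d: %s", res.StatusCode, raw)
	}
	return json.Unmarshal(raw, out)
}

// echoDemo exercises postJSON against a local test server that echoes the
// "text" field back, so the sketch can run offline.
func echoDemo() (string, error) {
	srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		var in map[string]string
		if err := json.NewDecoder(r.Body).Decode(&in); err != nil {
			http.Error(w, err.Error(), http.StatusBadRequest)
			return
		}
		w.Header().Set("Content-Type", "application/json")
		json.NewEncoder(w).Encode(map[string]string{"echo": in["text"]})
	}))
	defer srv.Close()

	var out map[string]string
	err := postJSON(srv.Client(), srv.URL, map[string]string{"text": "hello"}, &out)
	if err != nil {
		return "", err
	}
	return out["echo"], nil
}

func main() {
	got, err := echoDemo()
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(got)
}
```

With a helper like this, each API function only has to build its URL and payload, and API errors arrive as Go errors instead of silently decoding into an empty struct.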

1. Google Gemini

    package api

    import (
        "bytes"
        "encoding/json"
        "fmt"
        "io"
        "net/http"

        "github.com/spf13/viper"
    )

    // The structs below model only the text portion of the response.
    type Part struct {
        Text string `json:"text"`
    }

    type Content struct {
        Parts []Part `json:"parts"`
    }

    type Candidates struct {
        Content Content `json:"content"`
    }

    type PromptResult struct {
        Candidates []Candidates `json:"candidates"`
    }

    func Prompt(prompt string) (*PromptResult, error) {
        // I use viper to manage environment variables; you can replace this with your API key.
        url := fmt.Sprintf("https://generativelanguage.googleapis.com/v1beta/models/gemini-2.0-flash:generateContent?key=%s", viper.GetString("GOOGLE_CLOUD_API_KEY"))

        // This example sends a single prompt, but the request can carry more information.
        data, err := json.Marshal(map[string]interface{}{
            "contents": []map[string]interface{}{{
                "parts": []map[string]interface{}{{
                    "text": prompt,
                }},
            }},
        })
        if err != nil {
            return nil, err
        }

        req, err := http.NewRequest("POST", url, bytes.NewBuffer(data))
        if err != nil {
            return nil, err
        }
        req.Header.Add("Content-Type", "application/json")

        // Request the API
        res, err := http.DefaultClient.Do(req)
        if err != nil {
            return nil, err
        }
        defer res.Body.Close()

        resBody, err := io.ReadAll(res.Body)
        if err != nil {
            return nil, err
        }

        // Fail on a non-200 status so an error body is not parsed as an empty result.
        if res.StatusCode != http.StatusOK {
            return nil, fmt.Errorf("gemini request failed with status %d: %s", res.StatusCode, resBody)
        }

        // Parse the result
        var promptResult PromptResult
        if err := json.Unmarshal(resBody, &promptResult); err != nil {
            return nil, err
        }
        return &promptResult, nil
    }

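    package main

    import (
        "fmt"
        "log"

        "github.com/hsk-kr/tutorial/lib/api"
        "github.com/spf13/viper"
    )

    func main() {
        viper.SetConfigFile(".env")
        if err := viper.ReadInConfig(); err != nil {
            log.Fatal(err)
        }

        promptResult, err := api.Prompt("Generate a short story for kids")
        if err != nil {
            log.Fatal(err)
        }
        // Guard against an empty response before indexing into it.
        if len(promptResult.Candidates) == 0 || len(promptResult.Candidates[0].Content.Parts) == 0 {
            log.Fatal("no text in response")
        }
        fmt.Println(promptResult.Candidates[0].Content.Parts[0].Text)
    }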

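You can find more details about the API here: https://ai.google.dev/gemini-api/docs.

This example sends one prompt and receives a response. The response contains more than just text, but to keep things simple the structs I defined handle only the text, which is printed to the console.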
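Because the structs keep only the text, it is easy to index into `Candidates[0].Content.Parts[0]` and panic when the response is empty or is an error body. The sketch below shows one way to extract the text defensively. The helper `firstText` and the sample JSON are illustrative assumptions, trimmed to the fields the structs above already use plus `role` and `finishReason`, which are simply ignored.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"strings"
)

type Part struct {
	Text string `json:"text"`
}

type Content struct {
	Parts []Part `json:"parts"`
}

type Candidate struct {
	Content Content `json:"content"`
}

type PromptResult struct {
	Candidates []Candidate `json:"candidates"`
}

// sampleResponse is a hand-written, trimmed-down response body used only for
// this demonstration.
const sampleResponse = `{"candidates":[{"content":{"parts":[{"text":"Once upon "},{"text":"a time."}],"role":"model"},"finishReason":"STOP"}]}`

// firstText is a hypothetical helper. It joins the text parts of the first
// candidate and returns an error instead of panicking when nothing is there.
func firstText(raw []byte) (string, error) {
	var r PromptResult
	if err := json.Unmarshal(raw, &r); err != nil {
		return "", err
	}
	if len(r.Candidates) == 0 {
		return "", errors.New("response has no candidates")
	}
	var sb strings.Builder
	for _, p := range r.Candidates[0].Content.Parts {
		sb.WriteString(p.Text)
	}
	if sb.Len() == 0 {
		return "", errors.New("first candidate has no text")
	}
	return sb.String(), nil
}

func main() {
	text, err := firstText([]byte(sampleResponse))
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(text)
}
```

Unknown JSON fields are dropped by `encoding/json`, so the structs can stay small and grow only when more of the response is needed.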

I use Viper to manage environment variables, but you can test the code by substituting your own API key directly.

2. Google TTS

    package api

    import (
        "bytes"
        "encoding/base64"
        "encoding/json"
        "errors"
        "fmt"
        "io"
        "net/http"
        "strings"

        "github.com/spf13/viper"
    )

    type Voice struct {
        LanguageCodes          []string `json:"languageCodes"`
        Name                   string   `json:"name"`
        SsmlGender             Gender   `json:"ssmlGender"` // "MALE" or "FEMALE"
        NaturalSampleRateHertz int      `json:"naturalSampleRateHertz"`
    }

    type VoiceSelectionParam struct {
        LanguageCode string `json:"languageCode"`
        Name         string `json:"name"`
        SsmlGender   Gender `json:"ssmlGender"`
    }

    type VoicesResponse struct {
        Voices []Voice `json:"voices"`
    }

    type AudioEncoding string

    type Gender string

    const (
        MALE   Gender = "MALE"
        FEMALE Gender = "FEMALE"
    )

    const (
        LINEAR16 AudioEncoding = "LINEAR16"
        MP3      AudioEncoding = "MP3"
        OGG_OPUS AudioEncoding = "OGG_OPUS"
        MULAW    AudioEncoding = "MULAW"
        ALAW     AudioEncoding = "ALAW"
    )

    // convertVoiceToVoiceSelectionParam maps a listed voice to the "voice" field of a synthesize request.
    func convertVoiceToVoiceSelectionParam(voice Voice) (*VoiceSelectionParam, error) {
        if len(voice.LanguageCodes) == 0 {
            return nil, errors.New("empty LanguageCodes")
        }
        return &VoiceSelectionParam{
            LanguageCode: voice.LanguageCodes[0],
            Name:         voice.Name,
            SsmlGender:   voice.SsmlGender,
        }, nil
    }

    // GetVoiceList returns the voices the API supports for the given language code.
    func GetVoiceList(languageCode string) ([]Voice, error) {
        url := fmt.Sprintf("https://texttospeech.googleapis.com/v1/voices?languageCode=%s&key=%s", languageCode, viper.GetString("GOOGLE_CLOUD_API_KEY"))

        req, err := http.NewRequest("GET", url, nil)
        if err != nil {
            return nil, err
        }
        req.Header.Add("Content-Type", "application/json")

        res, err := http.DefaultClient.Do(req)
        if err != nil {
            return nil, err
        }
        defer res.Body.Close()

        resBody, err := io.ReadAll(res.Body)
        if err != nil {
            return nil, err
        }
        if res.StatusCode != http.StatusOK {
            return nil, fmt.Errorf("voices request failed with status %d: %s", res.StatusCode, resBody)
        }

        var voicesRes VoicesResponse
        if err := json.Unmarshal(resBody, &voicesRes); err != nil {
            return nil, err
        }
        return voicesRes.Voices, nil
    }

    // ConvertTextToAudio synthesizes input with the given voice and returns OGG_OPUS audio bytes.
    func ConvertTextToAudio(input string, voice Voice) ([]byte, error) {
        url := fmt.Sprintf("https://texttospeech.googleapis.com/v1/text:synthesize?key=%s", viper.GetString("GOOGLE_CLOUD_API_KEY"))

        voiceSelectionParam, err := convertVoiceToVoiceSelectionParam(voice)
        if err != nil {
            return nil, err
        }

        data, err := json.Marshal(map[string]interface{}{
            "input":       map[string]string{"text": input},
            "voice":       voiceSelectionParam,
            "audioConfig": map[string]string{"audioEncoding": string(OGG_OPUS)},
        })
        if err != nil {
            return nil, err
        }

        req, err := http.NewRequest("POST", url, bytes.NewBuffer(data))
        if err != nil {
            return nil, err
        }
        req.Header.Add("Content-Type", "application/json")

        res, err := http.DefaultClient.Do(req)
        if err != nil {
            return nil, err
        }
        defer res.Body.Close()

        body, err := io.ReadAll(res.Body)
        if err != nil {
            return nil, err
        }
        if res.StatusCode != http.StatusOK {
            return nil, fmt.Errorf("synthesize request failed with status %d: %s", res.StatusCode, body)
        }

        var result map[string]interface{}
        if err := json.Unmarshal(body, &result); err != nil {
            return nil, err
        }

        // The audio is returned as a base64-encoded string.
        audioContent, ok := result["audioContent"].(string)
        if !ok {
            return nil, errors.New("no audio content found in response")
        }
        return base64.StdEncoding.DecodeString(audioContent)
    }

    // getFirstXVoice returns the first voice whose name contains strToFind and whose gender matches, or nil.
    func getFirstXVoice(voices []Voice, strToFind string, gender Gender) *Voice {
        for i, v := range voices {
            if strings.Contains(strings.ToLower(v.Name), strToFind) && v.SsmlGender == gender {
                return &voices[i]
            }
        }
        return nil
    }

    func GetFirstStandardVoice(voices []Voice, gender Gender) *Voice {
        return getFirstXVoice(voices, "standard", gender)
    }

    func GetFirstWavenetVoice(voices []Voice, gender Gender) *Voice {
        return getFirstXVoice(voices, "wavenet", gender)
    }

    func GetFirstNeuralVoice(voices []Voice, gender Gender) *Voice {
        return getFirstXVoice(voices, "neural", gender)
    }

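    package main

    import (
        "log"
        "os"

        "github.com/hsk-kr/tutorial/lib/api"
        "github.com/spf13/viper"
    )

    func main() {
        viper.SetConfigFile(".env")
        if err := viper.ReadInConfig(); err != nil {
            log.Fatal(err)
        }

        voices, err := api.GetVoiceList("en-US")
        if err != nil {
            log.Fatal(err)
        }

        // GetFirstWavenetVoice returns nil when nothing matches, so check before dereferencing.
        voice := api.GetFirstWavenetVoice(voices, api.MALE)
        if voice == nil {
            log.Fatal("no matching voice found")
        }

        bAudio, err := api.ConvertTextToAudio("By the way, I am using neovim.", *voice)
        if err != nil {
            log.Fatal(err)
        }
        if err := os.WriteFile("./audio.opus", bAudio, 0644); err != nil {
            log.Fatal(err)
        }
    }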

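After running the program, you will find an audio file named audio.opus in the same directory.

There are three functions:

- GetVoiceList – fetches the list of voices the API supports.

- GetFirstWavenetVoice – as of February 27, 2025, there are three types of voices: wavenet, standard, and neural. Each type contains several voices, but since voice choice isn't a priority for me, I wrote a function that simply returns the first voice of a given type.

- ConvertTextToAudio – takes the text and a voice as arguments and returns the result as []byte. It requests the audio in OGG_OPUS format because I plan to use it in a web environment, but you can use any supported format.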
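Since the first-voice helpers return nil when nothing matches, a caller has to check before dereferencing. As a variation, here is a small sketch that tries several voice types in order and reports whether anything matched. The function `pickVoice` and the sample voice list are made up for illustration; the names only follow the `<language>-<type>-<letter>` pattern, and the genders are not taken from the real API.

```go
package main

import (
	"fmt"
	"strings"
)

// Voice is a trimmed-down copy of the struct used in the TTS code.
type Voice struct {
	Name       string
	SsmlGender string
}

// sampleVoices is invented demo data, not real API output.
var sampleVoices = []Voice{
	{Name: "en-US-Standard-B", SsmlGender: "MALE"},
	{Name: "en-US-Neural2-D", SsmlGender: "MALE"},
	{Name: "en-US-Wavenet-C", SsmlGender: "FEMALE"},
}

// pickVoice is a hypothetical helper. It walks the preferred type keywords in
// order and returns the first voice with a matching name and gender. The bool
// result is false when no voice matches any keyword.
func pickVoice(voices []Voice, gender string, preferred ...string) (Voice, bool) {
	for _, kind := range preferred {
		for _, v := range voices {
			nameMatches := strings.Contains(strings.ToLower(v.Name), strings.ToLower(kind))
			if nameMatches && v.SsmlGender == gender {
				return v, true
			}
		}
	}
	return Voice{}, false
}

func main() {
	// No male wavenet voice exists in the sample, so the neural one is chosen.
	v, ok := pickVoice(sampleVoices, "MALE", "wavenet", "neural", "standard")
	if !ok {
		fmt.Println("no voice found")
		return
	}
	fmt.Println(v.Name)
}
```

Returning a value plus a bool instead of a pointer makes the "not found" case hard to ignore at the call site.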

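If you read the documentation closely, you may notice that it leaves out some important details. For example, I came across the audio_encoding parameter and wanted to check which formats are supported, but no page linked directly to that information. I think the docs could be improved: where I expected a link to the audio encoding reference, there was only plain black text. In the end, I found the page by searching manually from the top of the documentation.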
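To make the encoding choice explicit, here is a minimal sketch that builds the synthesize request body for a chosen encoding and rejects anything outside a small allow-list. The function `synthesizeBody` and the file-extension mapping are my assumptions for illustration (the code above writes OGG_OPUS output to audio.opus, for instance); check the AudioEncoding reference for the authoritative list of formats.

```go
package main

import (
	"encoding/json"
	"fmt"
)

type AudioEncoding string

const (
	LINEAR16 AudioEncoding = "LINEAR16"
	MP3      AudioEncoding = "MP3"
	OGG_OPUS AudioEncoding = "OGG_OPUS"
)

// fileExt maps each encoding handled here to a file extension. The mapping is
// an assumption made for this sketch, not something the API returns.
var fileExt = map[AudioEncoding]string{
	LINEAR16: ".wav",
	MP3:      ".mp3",
	OGG_OPUS: ".ogg",
}

// synthesizeBody is a hypothetical helper. It returns the JSON body for a
// text:synthesize request, or an error for an encoding not listed in fileExt.
func synthesizeBody(text, languageCode, voiceName string, enc AudioEncoding) (string, error) {
	if _, ok := fileExt[enc]; !ok {
		return "", fmt.Errorf("unsupported encoding %q", enc)
	}
	b, err := json.Marshal(map[string]any{
		"input":       map[string]string{"text": text},
		"voice":       map[string]string{"languageCode": languageCode, "name": voiceName},
		"audioConfig": map[string]string{"audioEncoding": string(enc)},
	})
	if err != nil {
		return "", err
	}
	return string(b), nil
}

func main() {
	body, err := synthesizeBody("Hi", "en-US", "en-US-Wavenet-A", MP3)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(body)
	fmt.Println("save as: audio" + fileExt[MP3])
}
```

Keeping the encoding and the file extension in one table means the saved file name cannot drift from the format that was actually requested.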

3. Cloudflare R2

    package api

    import (
        "bytes"
        "context"
        "errors"
        "fmt"
        "time"

        "github.com/aws/aws-sdk-go-v2/aws"
        "github.com/aws/aws-sdk-go-v2/config"
        "github.com/aws/aws-sdk-go-v2/credentials"
        "github.com/aws/aws-sdk-go-v2/feature/s3/manager"
        "github.com/aws/aws-sdk-go-v2/service/s3"
        "github.com/google/uuid"
        "github.com/spf13/viper"
    )

    type Storage struct {
        client     *s3.Client
        uploader   *manager.Uploader
        bucketName string
    }

    // Init builds the S3 client and uploader, pointed at the R2 endpoint for the account.
    func (s *Storage) Init() error {
        cfg, err := config.LoadDefaultConfig(context.TODO(),
            config.WithCredentialsProvider(credentials.NewStaticCredentialsProvider(viper.GetString("CLOUDFLARE_R2_ACCESS_KEY_ID"), viper.GetString("CLOUDFLARE_R2_SECRET_ACCESS_KEY"), "")),
            config.WithRegion("auto"),
        )
        if err != nil {
            return err
        }

        s.bucketName = viper.GetString("CLOUDFLARE_R2_BUCKET_NAME")
        s.client = s3.NewFromConfig(cfg, func(o *s3.Options) {
            o.BaseEndpoint = aws.String(fmt.Sprintf("https://%s.r2.cloudflarestorage.com", viper.GetString("CLOUDFLARE_R2_ACCOUNT_ID")))
        })
        s.uploader = manager.NewUploader(s.client)
        return nil
    }

    // Put uploads data under a generated UUID key and returns that key.
    func (s *Storage) Put(data []byte) (string, error) {
        if s.client == nil {
            return "", errors.New("client is nil")
        }

        objectKey, err := uuid.NewUUID()
        if err != nil {
            return "", err
        }

        bucket := aws.String(s.bucketName)
        key := aws.String(objectKey.String())
        ctx := context.Background()

        input := &s3.PutObjectInput{
            Bucket: bucket,
            Key:    key,
            Body:   bytes.NewReader(data),
        }
        output, err := s.uploader.Upload(ctx, input)
        if err != nil {
            return "", err
        }

        // Wait until the object is visible before reporting success.
        err = s3.NewObjectExistsWaiter(s.client).Wait(ctx, &s3.HeadObjectInput{
            Bucket: bucket,
            Key:    key,
        }, time.Minute)
        if err != nil {
            return "", err
        }

        // aws.ToString returns "" instead of panicking if the key pointer is nil.
        return aws.ToString(output.Key), nil
    }

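    package main

    import (
        "log"

        "github.com/tutorial/justsayit/lib/api"
        "github.com/spf13/viper"
    )

    func main() {
        viper.SetConfigFile(".env")
        if err := viper.ReadInConfig(); err != nil {
            log.Fatal(err)
        }

        voices, err := api.GetVoiceList("en-US")
        if err != nil {
            log.Fatal(err)
        }
        voice := api.GetFirstWavenetVoice(voices, api.MALE)
        if voice == nil {
            log.Fatal("no matching voice found")
        }
        bAudio, err := api.ConvertTextToAudio("By the way, I am using neovim.", *voice)
        if err != nil {
            log.Fatal(err)
        }

        storage := new(api.Storage)
        if err := storage.Init(); err != nil {
            log.Fatal(err)
        }
        key, err := storage.Put(bAudio)
        if err != nil {
            log.Fatal(err)
        }
        log.Println("uploaded object key:", key)
    }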

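Cloudflare R2 is similar to Amazon S3, so you can use the AWS S3 library to work with its API.

You can find more examples in the AWS documentation: https://docs.aws.amazon.com/code-library/latest/ug/go_2_s3_code_examples.html.

Because a few instances have to be created before an object can be uploaded, I defined the necessary methods on a struct.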
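The Put method above stores the audio under a bare UUID with no extension or content type. If you want the stored object to be easier to serve later, one option is to add an extension to the key and sniff the content type before uploading. The sketch below uses only the standard library; `newObjectKey` is a hypothetical stand-in for the uuid package, and passing the sniffed type to the `ContentType` field of `s3.PutObjectInput` is a suggestion on my part, not something the code above does.

```go
package main

import (
	"crypto/rand"
	"encoding/hex"
	"fmt"
	"net/http"
)

// newObjectKey is a hypothetical helper. It returns 32 random hex characters
// followed by ext, standing in for the UUID key used in the original code.
func newObjectKey(ext string) (string, error) {
	buf := make([]byte, 16)
	if _, err := rand.Read(buf); err != nil {
		return "", err
	}
	return hex.EncodeToString(buf) + ext, nil
}

// sniffContentType guesses a MIME type from the first bytes of the data using
// the standard library's content sniffer.
func sniffContentType(data []byte) string {
	return http.DetectContentType(data)
}

func main() {
	// Ogg files start with the capture pattern "OggS" followed by a zero byte.
	oggHeader := []byte("OggS\x00")

	key, err := newObjectKey(".ogg")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("key:", key)
	fmt.Println("content type:", sniffContentType(oggHeader))
}
```

The sniffer reports Ogg data as application/ogg rather than audio/ogg, so you may prefer to set the type explicitly from the encoding you requested.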

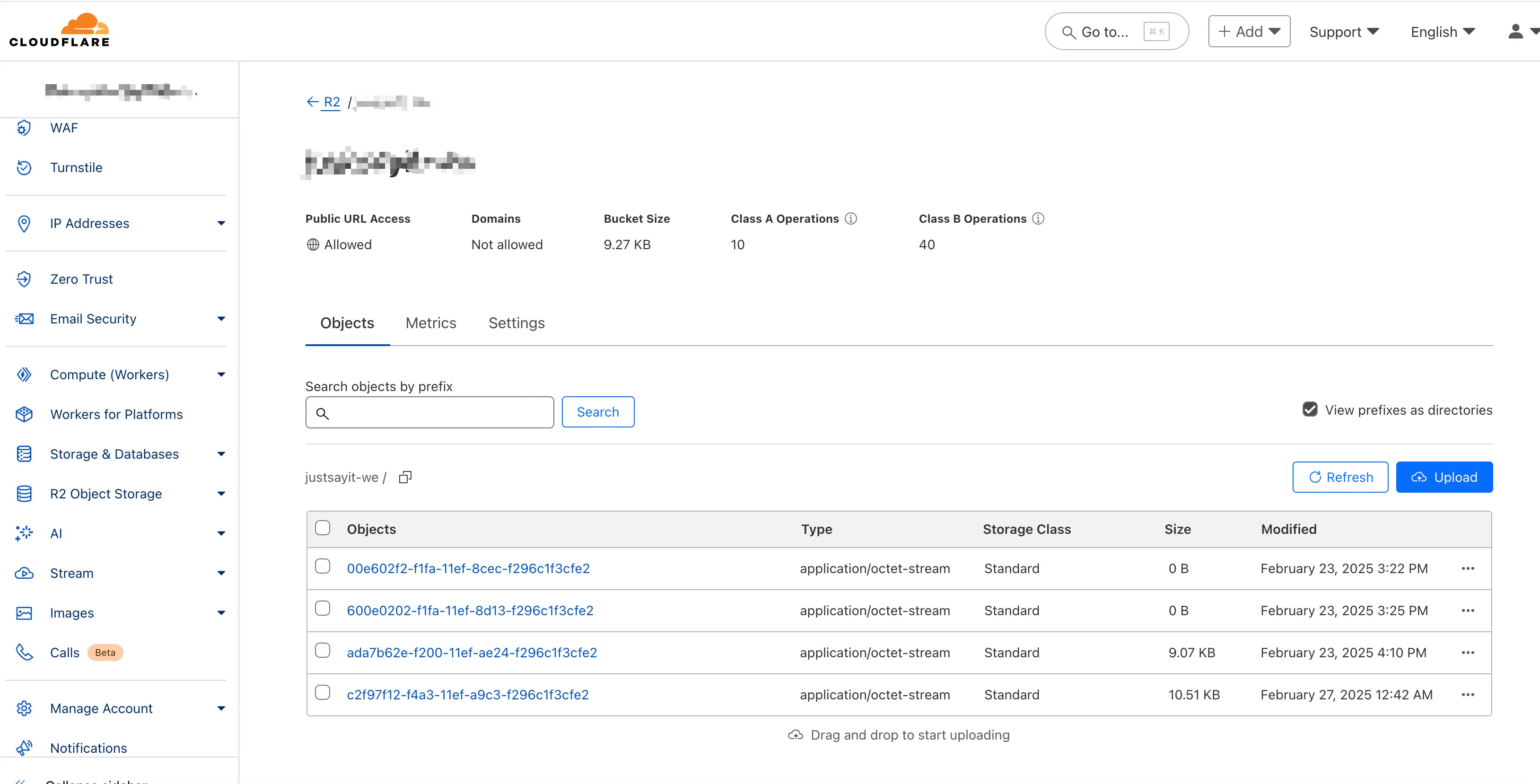

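After running the program, you should see the object uploaded to Cloudflare.

To test whether the audio file loads correctly in a web environment, I put the object URL in an audio tag, but it didn't work. It turned out that even though I had made the file publicly accessible, I still needed to go through a proxy to access it. That makes sense from a security standpoint, since access has to be explicitly allowed in the settings.

If you want temporary access to the file for testing, you can enable development mode and use the development link.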
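Before wiring a URL into a page, it can help to check from code whether it is reachable and served as audio. The sketch below sends a HEAD request and inspects the status and Content-Type. The helper `checkAudioURL` and the paths are hypothetical, and the demo uses a local test server in place of a real bucket, with one path that answers and one that is forbidden.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// checkAudioURL is a hypothetical helper. It returns nil only when the URL
// answers 200 and its Content-Type looks like audio.
func checkAudioURL(client *http.Client, url string) error {
	res, err := client.Head(url)
	if err != nil {
		return err
	}
	defer res.Body.Close()

	if res.StatusCode != http.StatusOK {
		return fmt.Errorf("unexpected status %d", res.StatusCode)
	}
	ct := res.Header.Get("Content-Type")
	if !strings.HasPrefix(ct, "audio/") && ct != "application/ogg" {
		return fmt.Errorf("unexpected content type %q", ct)
	}
	return nil
}

// demoCheck runs checkAudioURL against a local server: /public.ogg is served
// as audio, every other path is rejected with 403.
func demoCheck() (publicErr, privateErr error) {
	srv := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.URL.Path != "/public.ogg" {
			http.Error(w, "forbidden", http.StatusForbidden)
			return
		}
		w.Header().Set("Content-Type", "audio/ogg")
		w.WriteHeader(http.StatusOK)
	}))
	defer srv.Close()

	publicErr = checkAudioURL(srv.Client(), srv.URL+"/public.ogg")
	privateErr = checkAudioURL(srv.Client(), srv.URL+"/private.ogg")
	return publicErr, privateErr
}

func main() {
	publicErr, privateErr := demoCheck()
	fmt.Println("public:", publicErr)
	fmt.Println("private:", privateErr)
}
```

Pointing the same helper at a real object URL gives a quick signal about whether public access is actually enabled for it.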

4. Final Code

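    package main

    import (
        "log"

        "github.com/tutorial/justsayit/lib/api"
        "github.com/spf13/viper"
    )

    func main() {
        viper.SetConfigFile(".env")
        if err := viper.ReadInConfig(); err != nil {
            log.Fatal(err)
        }

        // 1. Generate the text.
        promptResult, err := api.Prompt("Say something short in German")
        if err != nil {
            log.Fatal(err)
        }
        if len(promptResult.Candidates) == 0 || len(promptResult.Candidates[0].Content.Parts) == 0 {
            log.Fatal("no text in response")
        }
        generatedText := promptResult.Candidates[0].Content.Parts[0].Text

        // 2. Convert the text to audio.
        voices, err := api.GetVoiceList("de-DE")
        if err != nil {
            log.Fatal(err)
        }
        voice := api.GetFirstWavenetVoice(voices, api.MALE)
        if voice == nil {
            log.Fatal("no matching voice found")
        }
        bAudio, err := api.ConvertTextToAudio(generatedText, *voice)
        if err != nil {
            log.Fatal(err)
        }

        // 3. Store the audio in R2.
        storage := new(api.Storage)
        if err := storage.Init(); err != nil {
            log.Fatal(err)
        }
        if _, err := storage.Put(bAudio); err != nil {
            log.Fatal(err)
        }
    }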

That's the final code: it generates text with Google Gemini, converts the text into an audio file, and stores the audio file in the cloud.
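The three steps form a simple pipeline, and it is useful to know which step failed when something goes wrong. Below is a minimal sketch of that idea using plain function fields, so each stage can be swapped for a stub. The `Pipeline` type and `stubPipeline` are illustrative assumptions; in the real program the fields would wrap Prompt, ConvertTextToAudio, and Storage.Put.

```go
package main

import (
	"errors"
	"fmt"
)

// Pipeline is a hypothetical type that holds the three stages as functions.
type Pipeline struct {
	Generate   func(prompt string) (string, error)
	Synthesize func(text string) ([]byte, error)
	Store      func(audio []byte) (string, error)
}

// Run executes the stages in order and wraps any error with the stage name.
func (p Pipeline) Run(prompt string) (string, error) {
	text, err := p.Generate(prompt)
	if err != nil {
		return "", fmt.Errorf("generate text: %w", err)
	}
	audio, err := p.Synthesize(text)
	if err != nil {
		return "", fmt.Errorf("synthesize audio: %w", err)
	}
	key, err := p.Store(audio)
	if err != nil {
		return "", fmt.Errorf("store audio: %w", err)
	}
	return key, nil
}

// stubPipeline returns a pipeline built from in-memory stand-ins. When
// failTTS is true, the synthesize stage returns an error.
func stubPipeline(failTTS bool) Pipeline {
	return Pipeline{
		Generate: func(prompt string) (string, error) {
			return "Hallo", nil
		},
		Synthesize: func(text string) ([]byte, error) {
			if failTTS {
				return nil, errors.New("tts unavailable")
			}
			return []byte(text), nil
		},
		Store: func(audio []byte) (string, error) {
			return fmt.Sprintf("stub-key-%d", len(audio)), nil
		},
	}
}

func main() {
	key, err := stubPipeline(false).Run("Say something short in German")
	fmt.Println(key, err)

	_, err = stubPipeline(true).Run("Say something short in German")
	fmt.Println(err)
}
```

Wrapping with `%w` keeps the original error available to `errors.Is` and `errors.As` while still naming the stage that failed.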

Original article: Go Simple Example: Generate Audio Stories with Google Gemini, TTS, and Cloudflare R2
